Researcher: Megan Barker

Position: Science Teaching and Learning Post-Doctoral Fellow with the Carl Wieman Science Education Initiative

Faculty: Science

Department: Biology

Year level: First

Number of students: 84

Problem addressed:

The amount of new technical vocabulary in undergraduate science courses is atrocious and overwhelming, and it complicates students’ understanding of key concepts.

Solution approach:

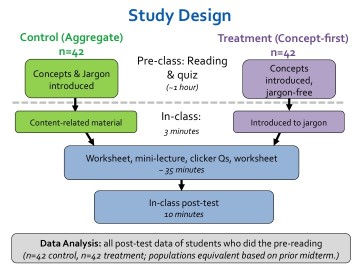

Students in a first-year undergraduate biology course were given a pre-class reading assignment that explained concepts without any technical language. Their understanding of these concepts was compared with that of students in another section of the same course, whose reading presented the same concepts with the jargon intact. Both groups attended the same classes and completed the same post-test.

Evaluation approach:

The results were interpreted in light of cognitive load theory: recognizing the right term or the right concept imposes a relatively low cognitive load compared with having to synthesize new ideas.

Main findings:

There was no difference between the two groups in their multiple-choice responses. However, students who had the jargon-free pre-class reading gave a higher number of correct explanations of the concepts.

Can you give some background on the research?

Megan Barker: I work with a first-year biology course. Something we have noticed is that the amount of new vocabulary in first-year biology courses is atrocious and overwhelming. But we can’t just get rid of the jargon, because we are preparing students to talk with scientists and be scientists, and it’s a jargon-heavy field. So we wanted to find a better way to teach jargon without sacrificing the conceptual understanding that we think jargon sometimes gets in the way of.

What is the research question?

MB: The experiment was: Can we pull out the jargon and have the students’ initial exposure to the material use only plain-language explanations of the concepts? In one group’s pre-class readings, we took out all of the technical language, as identified by the instructors and by the textbook, and replaced it with plain language that just explained the concept. For example, purine became “large base” and pyrimidine became “small base.” For the other section, we left the pre-reading as is. Both groups did the pre-reading and a pre-class quiz, and then came to class.

The first five minutes of class were a little different, because we had to introduce the jargon to the first group, who hadn’t seen it at all, but aside from that the classes were the same. At the end of class we asked them to do a short post-test that included multiple-choice questions, both with and without the jargon, as well as some short-answer, open-response questions where they solved a problem or described a situation.

Then we compared the two groups on that post-test to see whether including or omitting the jargon in their pre-reading had affected their ability to answer the multiple-choice questions and their ability to answer the short-answer questions in writing.

What did you find?

MB: We didn’t see any difference between the two groups on the multiple-choice questions, which tells us that our experimental variable didn’t have any impact on the students’ ability to recognize the correct jargon or the correct concept.

We did see a difference in their writing. There was no difference between the two groups in their correct use of the jargon, so we weren’t helping them do better with the jargon, but we also weren’t creating a problem for them. What we did notice was a higher number of correct explanations of the concepts in the group whose pre-reading had the jargon removed. Pulling the jargon out helped them explain better. It helped them understand the concepts better.

A flowchart of the experimental design. The control and concepts-first groups received the same reading and quiz, except that the jargon was replaced with plain language in the concepts-first materials. In class, students in the concepts-first (treatment) group were briefly introduced to the jargon through a reading. (from: http://onlinelibrary.wiley.com/doi/10.1002/bmb.20922/epdf)

How did you evaluate your findings?

MB: We interpreted the results in light of cognitive load theory. The cognitive load of recognizing the right term or the right concept is pretty low relative to having to synthesize new ideas. When we asked students to articulate their understanding, we saw more correct articulation from the students in the treatment group. Maybe that’s because when students are encountering new jargon and new concepts while also trying to write and articulate, there’s a lot going on. They are probably maxing out their cognitive load, and one of the first things to go is the ability to articulate.

How has this study impacted teaching and learning?

MB: I happened to be the instructor for this experiment, so I read all of the open-response answers. I was hearing the things I had said come out of their mouths. Some students picked up on a side comment and thought it was the main point. Some totally misinterpreted the main point. Now I am a lot more careful, and I try to be more streamlined in the words I use and don’t use. They don’t need more jargon thrown at them. The more I can help them with that, the better.

We didn’t want to do an experiment that might tell us a lot about teaching but that nobody could then put into practice. We set out to design an intervention that would not require a lot of class time or massive changes to an entire curriculum. You take your pre-reading, you remove the vocabulary from it, and then you dedicate a few minutes at the start of class to give students a list of the vocabulary. It’s straightforward.

How will this study impact future research?

MB: Like any good work, it raises more questions than it answers. Did we pick the right terms? Is the effect specific to this type of unit? Would you see the same effect over the long term? Would you see the same effect in different disciplines, or even on different days with different students?

One of the things we noticed was that choosing which terms counted as jargon was not a student-led process. It was based on what the textbook author said and what the instructors had noticed was a problem for students. So I would have liked our selection of vocabulary to be more student-driven, especially if we want the approach to apply more widely.